Government agencies face rising demand and flat budgets, and while generative AI can help, successful adoption depends on whether the workforce has the human capability to interpret, review, and correct AI outcomes where it matters most.

Key Takeaways:

- Original Intelligence (OI) is the missing layer in government AI adoption. While AI handles efficiency, OI is the human capacity to generate insight and judgment beyond AI's statistical center. Without it, automation risks producing uniform answers for non-uniform human realities.

- Originality can be measured and managed. Hupside's Hupchecker platform produces four key metrics: OIQ, OIQ Types, POVI, and ROVI. Together, these allow leaders to match people to roles, design better training, and monitor for AI overreliance.

- Autonomy determines whether originality creates value. High OIQ paired with low autonomy signals frustration and underutilization. Aligning originality capability with role-level discretion is what maximizes both differentiation and AI return on investment.

- AI transformation fails when leaders mistake tool adoption for value creation. Government modernization often prioritizes platforms and procurement while underinvesting in the workforce alignment that determines real outcomes: who interprets AI outputs, who handles exceptions, and who stays accountable when automation fails.

The Need for Novelty

In AI-enabled government, the tech stack has four layers: data, infrastructure, AI systems, and Original Intelligence (OI). As AI comes to dominate the first three layers, OI becomes the defining source of differentiation.

A structural feature of generative AI is output convergence. When widely used, AI pushes responses toward a statistical center, reducing variation in how problems are framed and solved. The risk is that uniform answers get applied to non-uniform human realities.

Original Intelligence is the human capacity to generate insight and decisions beyond AI’s statistical center.

It is not creativity as a personality trait, and it is not a cultural aspiration. Instead, it is operational originality, such as the ability to reframe problems, make unexpected connections, and exercise sound judgment under ambiguity.

Organizations that suppress or fail to deploy human originality may achieve short-term efficiency gains, but the relationship between government and its citizens will suffer.

Due to the inherent limitations of AI, Original Intelligence will always be required in the portion of government service that requires real differentiation. What will differ is how closely, and in what style, each individual’s OI capabilities match their role.

Measuring Original Intelligence

Originality cannot be managed if it is not measured. Traditional performance metrics cannot distinguish between human originality and AI-assisted output. Hupside’s Hupchecker is the first Original Intelligence discovery infrastructure platform that measures how individuals generate originality when AI is available.

Put another way, it identifies individuals who expand beyond AI’s baseline.

Hupchecker produces four primary outputs:

- Original Intelligence Quotient (OIQ)

- OIQ Types

- Personal Originality Value Intensity (POVI)

- Role Originality Value Intensity (ROVI)

Knowing where an individual stands on these four measures supports several ways of using Original Intelligence to enhance differentiation, including:

- Matching individuals with job roles and responsibilities to enhance value creation opportunities, with or without using AI.

- Matching training with individual originality approaches to drive higher AI adoption rates and more discriminating use.

- Signaling to individuals that they are valued rather than treated as assets to be substituted. AI becomes an “and” rather than an “or” conversation.

- Matching data governance and use structures to an individual’s AI competencies.

- Monitoring performance for AI overreliance and homogenized output.

Predicting Performance

The Original Intelligence Quotient (OIQ) is a direct predictor of an individual’s key attributes for performance in an AI-powered organization. It measures:

- Predilection to create differentiable output.

- Likelihood of using AI, and other tools, to create differentiable output.

- Tolerance for ambiguity, optimism, self-reliance and resilience.

- Consistency in performance across varying roles.

High-OIQ individuals expand conceptual boundaries; lower-OIQ individuals align more closely with AI-derived output. Crucially, OIQ captures how people use AI, not whether they use it. Individuals who treat AI as an accelerant to their own thinking demonstrate higher OIQ than those who accept AI output verbatim.

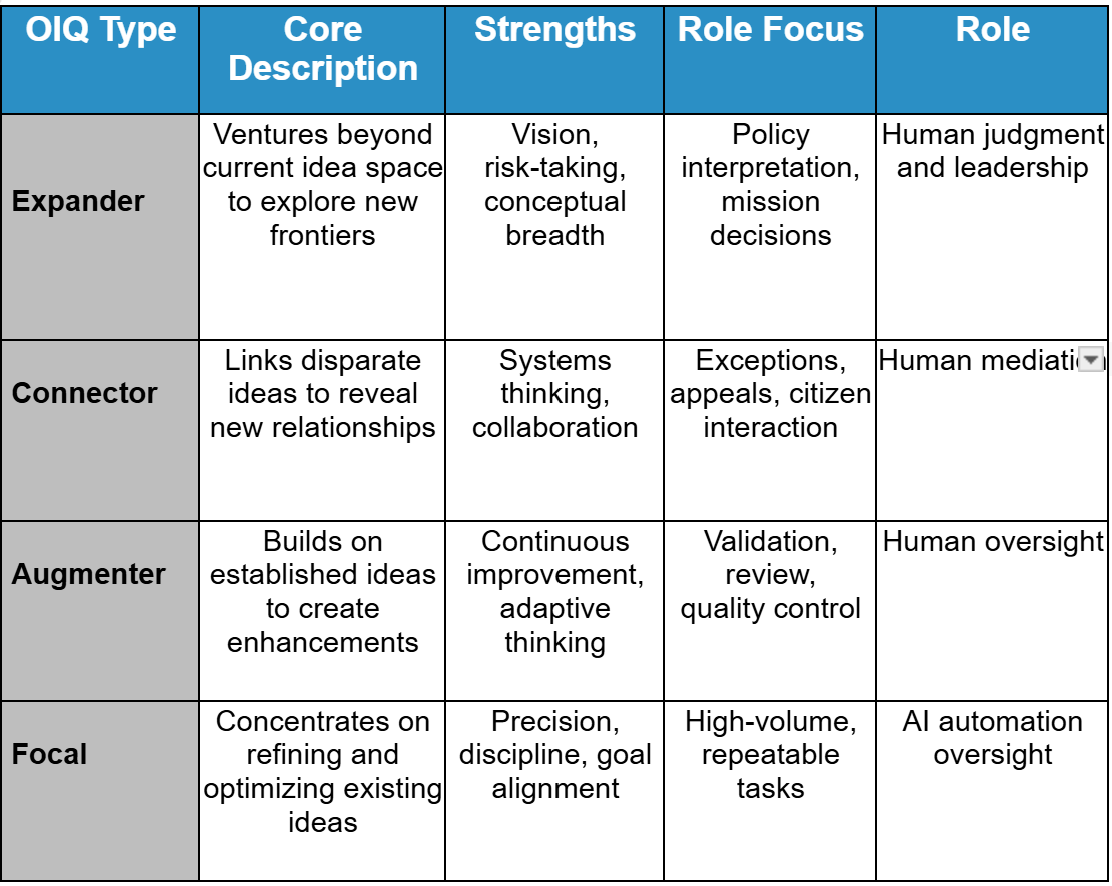

OIQ scores provide enough specificity to support more effective team building, training, and job matching in a post-AI environment. They do so by identifying how an individual demonstrates OI through a distinct cognitive pattern: an OIQ Type.

Hupchecker identifies four distinct patterns in how people apply OI in their roles. The four primary OIQ Types are Expanders, Connectors, Augmenters, and Focals.

Together, OIQ and OIQ Types establish a baseline that predicts how readily an individual adapts to AI and performs under AI-assisted conditions. They also surface role-specific risk patterns:

- Expanders may overextend AI use without guardrails.

- Connectors may over-integrate outputs without structure.

- Augmenters may underutilize AI’s creative potential.

- Focals may accept AI outputs too uncritically.

These risks can be mitigated through pairing, training, and governance design.
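As an illustration of how these risk patterns could feed pairing and governance decisions, the following minimal Python sketch summarizes which risks are present on a team and which OIQ Types are absent. The risk descriptions are taken from the list above; the roster format and the function name `team_risk_summary` are hypothetical and are not part of Hupchecker.

```python
from collections import Counter

# Risk patterns taken from the list above, keyed by OIQ Type.
TYPE_RISKS = {
    "Expander": "may overextend AI use without guardrails",
    "Connector": "may over-integrate outputs without structure",
    "Augmenter": "may underutilize AI's creative potential",
    "Focal": "may accept AI outputs too uncritically",
}


def team_risk_summary(roster):
    """Summarize type-level risks for a team.

    roster: mapping of person name -> OIQ Type (hypothetical input format).
    """
    unknown = set(roster.values()) - set(TYPE_RISKS)
    if unknown:
        raise ValueError(f"unknown OIQ Type(s): {sorted(unknown)}")

    counts = Counter(roster.values())
    return {
        # Risks to address through pairing, training, or governance design.
        "risks_present": {t: TYPE_RISKS[t] for t in TYPE_RISKS if counts[t] > 0},
        # Types a leader might add when designing a cross-type team.
        "missing_types": [t for t in TYPE_RISKS if counts[t] == 0],
    }


if __name__ == "__main__":
    summary = team_risk_summary({"Ana": "Focal", "Ben": "Focal", "Cy": "Expander"})
    print(summary["missing_types"])
```

A summary like this only flags where to look; the mitigation itself remains a staffing and governance decision.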

Autonomy as Force Multiplier

When deploying AI in a group, additional measurement is needed. Original Intelligence only creates value when individuals have sufficient autonomy to exercise it.

Role Originality Value Intensity (ROVI) measures the expected autonomy of a role—the level of discretion and responsibility embedded in it.

In general, someone who works on their own without supervision has a higher degree of autonomy than someone who works in a group, and a senior leader has more ability to influence an organization than an entry-level team member. This provides a baseline for the expected autonomy of an individual based on their role.

Personal Originality Value Intensity (POVI) measures the autonomy an individual actually experiences within their organizational culture.

Together, ROVI and POVI reveal whether originality is being enabled or constrained.

- High OIQ + low autonomy signals frustration and underutilization.

- High autonomy + low OIQ signals quality risk.

- Strong alignment maximizes differentiation and AI return on investment.

When combined with OIQ, these measures provide a clear picture of an individual’s opportunity to create value within their role:

- OIQ vs. POVI: Is originality capability being enabled or constrained?

- OIQ vs. ROVI: Is originality capability aligned with the role’s differentiation demands?
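The two comparisons above can be sketched as a small lookup. This is a minimal illustration and not Hupchecker's scoring: the three-level scale, the treatment of equal levels as "strong alignment", the "partial alignment" fallback, and the function names are all assumptions, since the scoring scales are not described here.

```python
from enum import IntEnum


class Level(IntEnum):
    """Hypothetical three-level scale used only for this sketch."""
    LOW = 1
    MID = 2
    HIGH = 3


def alignment_signal(oiq: Level, autonomy: Level) -> str:
    """Map an OIQ level and an autonomy level (POVI or ROVI) to a signal."""
    if oiq is Level.HIGH and autonomy is Level.LOW:
        return "frustration and underutilization"
    if autonomy is Level.HIGH and oiq is Level.LOW:
        return "quality risk"
    if oiq == autonomy:
        # Assumption: equal levels are treated as strong alignment.
        return "strong alignment"
    # Assumption: the text does not name the remaining combinations.
    return "partial alignment"


def role_fit(oiq: Level, povi: Level, rovi: Level) -> dict:
    """Answer the two questions above for one individual."""
    return {
        "oiq_vs_povi": alignment_signal(oiq, povi),  # enabled or constrained?
        "oiq_vs_rovi": alignment_signal(oiq, rovi),  # aligned with role demands?
    }


if __name__ == "__main__":
    # A high-OIQ individual in a high-autonomy role who experiences little autonomy.
    print(role_fit(Level.HIGH, povi=Level.LOW, rovi=Level.HIGH))
```

Keeping the POVI and ROVI comparisons separate makes it visible when a role is well matched on paper but the individual's experienced autonomy falls short.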

Sample Use Cases

1. Cost-to-Serve Without Trust Loss: Automate routine tasks while staffing escalation layers with high-OIQ mediators.

2. Appeals and Error Mediation: Deploy Connectors and Expanders to interpret context and resolve disputes quickly.

3. Enforcement and Administrative Discretion: Use OI as a predictor of responsible judgment under ambiguity.

4. Distributed Decision-Making: Identify individuals resilient under incomplete information and high consequence.

Establishing an AI Readiness Baseline

By baselining OIQ, OIQ Types, POVI, and ROVI across units, organizations can establish an AI Readiness Index.

This allows leaders to:

- Predict early adopters and likely resistors

- Identify where autonomy loss may trigger disengagement

- Staff escalation and discretionary roles intentionally

- Target governance where AI overreliance risk is highest

- Design cross-type teams to accelerate learning

For example:

- High OIQ with high autonomy = natural early adopters; suited for complex discretionary roles with governance oversight.

- High OIQ with low autonomy = likely frustrated; may require role redesign or greater responsibility.

- Mid OIQ with constrained autonomy = benefit from structured AI training and defined guardrails.

- Low OIQ with high autonomy = require stronger review loops and quality controls.

- Low OIQ with low autonomy = best positioned in structured, repeatable workflows with clear guidance.
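The examples above amount to a decision table, which could be encoded roughly as follows. This is a sketch under stated assumptions: the level labels are hypothetical, "constrained" autonomy is treated as "low", and combinations not listed above return `None` rather than invented guidance.

```python
from collections import Counter

# Guidance paraphrased from the examples above, keyed by (OIQ level, autonomy level).
# Assumption: "constrained" autonomy in the mid-OIQ example is treated as "low".
READINESS_GUIDANCE = {
    ("high", "high"): "natural early adopter; complex discretionary role with governance oversight",
    ("high", "low"): "likely frustrated; consider role redesign or greater responsibility",
    ("mid", "low"): "structured AI training and defined guardrails",
    ("low", "high"): "stronger review loops and quality controls",
    ("low", "low"): "structured, repeatable workflows with clear guidance",
}


def readiness_guidance(oiq_level, autonomy_level):
    """Return guidance for one individual, or None if the combination is not listed."""
    key = (oiq_level.lower(), autonomy_level.lower())
    return READINESS_GUIDANCE.get(key)


def unit_profile(members):
    """Count guidance categories across a unit.

    members: iterable of (oiq_level, autonomy_level) pairs (hypothetical format).
    Unlisted combinations are counted under "not covered".
    """
    return Counter(readiness_guidance(o, a) or "not covered" for o, a in members)


if __name__ == "__main__":
    unit = [("high", "low"), ("high", "low"), ("low", "high"), ("mid", "high")]
    for guidance, count in unit_profile(unit).items():
        print(count, guidance)
```

Aggregating by unit in this way is one possible input to a readiness baseline; how the levels are derived from actual scores is left open here.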

AI transformation fails when leaders mistake tool adoption for value creation. Government modernization often prioritizes platforms and procurement while underinvesting in the workforce alignment that determines real outcomes: who interprets AI outputs, who handles exceptions, and who remains accountable when automation fails.

Implementation Made Easy

Implementation can be broken into four easy phases:

1. Benchmark: Establish OIQ, OIQ Types, POVI, and ROVI.

2. Pilot: Deploy AI in structured workflows while staffing human escalation layers.

3. Align: Adjust autonomy, training, and review loops.

4. Sustain: Reassess periodically to avoid homogenization and overreliance.

Conclusion

Generative AI can improve throughput and reduce cost-to-serve. But public service does not exist to maximize output alone. It exists to deliver fair, accountable, and context-aware outcomes. As automation expands, agencies must intentionally preserve a human mediation layer capable of interpreting nuance, correcting errors, exercising discretion, and operating responsibly under ambiguity.

This is not an argument against AI. It is an argument for alignment.

Original Intelligence is the capability that ensures efficiency does not come at the expense of legitimacy. By measuring originality and autonomy prospectively, government leaders can predict adoption patterns, design escalation layers intentionally, prevent homogenization risk, and deploy AI in ways that strengthen rather than weaken public trust.

In the age of AI, government effectiveness will not be defined by how much is automated, but by how deliberately human judgment remains in the loop.