There are two durable ways to improve margins: reduce costs through efficiency or increase pricing power through differentiation. Generative AI accelerates the first, but Original Intelligence is what drives the second.

Key Takeaways:

- AI alone is unlikely to provide durable competitive advantage for most firms. Because generative AI is widely accessible and trained on broadly available data, competitors deploying similar systems will see their outputs converge.

- Original Intelligence is the scarce asset that creates pricing power. This is operational originality: the ability to reframe problems, synthesize unexpected connections, and exercise sound judgment under ambiguity. Scarcity is what sustains pricing power, and in an AI-saturated environment, originality is what's scarce.

- Hupchecker measures four dimensions of originality to activate both margin levers at once. OIQ, OIQ Types, POVI, and ROVI allow organizations to match talent to roles, design teams that amplify human creativity, and monitor for AI overreliance, all while using AI aggressively to remove friction and cost.

- Without deliberate alignment, organizations that treat AI as a strategy in itself will homogenize. Those that pair AI's cost-reduction power with measured, role-aligned Original Intelligence will capture efficiency gains without losing distinctiveness.

How Do Generative AI and Original Intelligence Work Together?

Every business transformation ultimately rises or falls on one outcome: margin improvement. There are two durable ways to improve margins: reduce costs through efficiency or increase pricing power through differentiation.
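As a rough illustration of the two levers, the sketch below computes margin as (price - cost) / price. Every figure is hypothetical and chosen only to show the arithmetic. The `margin` helper is an illustrative name, not part of any Hupside tooling.

```python
def margin(price: float, cost: float) -> float:
    """Return margin as a fraction of price: (price - cost) / price."""
    if price <= 0:
        raise ValueError("price must be positive")
    return (price - cost) / price


# Hypothetical baseline: sell at 100, cost to deliver is 80.
baseline = margin(price=100.0, cost=80.0)

# Lever 1 (efficiency): cost falls by 10% at the same price.
efficiency_only = margin(price=100.0, cost=72.0)

# Lever 2 (differentiation): price rises by 10% at the same cost.
pricing_only = margin(price=110.0, cost=80.0)

# Both levers applied together.
both = margin(price=110.0, cost=72.0)

for label, value in [
    ("baseline", baseline),
    ("efficiency only", efficiency_only),
    ("pricing only", pricing_only),
    ("both levers", both),
]:
    print(f"{label}: {value:.1%}")
```

In this made-up case, either lever alone lifts margin from 20.0% to roughly 27 to 28%, while both together reach about 34.5%.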

Generative AI has dramatically accelerated the first lever. It automates tasks, shortens timelines, and lowers labor costs. These gains are real. But as AI becomes ubiquitous, its efficiency advantage will level out.

Because generative AI is widely accessible, it tends to produce similar outputs across organizations, weakening differentiation. Without deliberate training and role alignment, human teams risk blending into that sameness rather than rising above it. In the AI era, success will hinge on an organization’s ability to maintain and expand differentiation while using AI.

That depends on human capability. Organizations must have the expertise to interpret AI outputs, spot exceptions, and correct errors when automation falls short.

We call this Original Intelligence. While generative AI increases efficiency, Original Intelligence drives profitability.

Organizations that deliberately pair AI’s cost-reduction power with Original Intelligence, the human capacity to create value competitors cannot easily replicate, can grow their margins.

Hupside’s Hupchecker was designed to operationalize that pairing. It enables organizations to identify and measure human-derived novelty. With those measurements, organizations can better align talent, roles, and operating models to activate both margin levers simultaneously.

Producing Durable Profit Gains

The AI-enabled enterprise rests on four components: data, infrastructure, AI systems, and Original Intelligence.

AI increasingly dominates the first three layers. As model performance improves, analytical and executional work becomes automated. But AI systems share a structural limitation: they are trained on broadly available data and increasingly on AI-generated output. Over time, novelty is absorbed and redistributed. Differentiation becomes shared.

The predictable result is convergence. As competitors deploy similar systems, efficiency advantages flatten, and outputs homogenize. AI alone is unlikely to provide durable competitive advantage for most firms. True novelty originates from people.

Original Intelligence is the human capacity to generate insight and decisions beyond AI’s statistical center.

It is not personality-driven creativity. Instead, it is operational originality: the ability to reframe problems, synthesize unexpected connections, and exercise sound judgment under ambiguity. It is measurable. And in an AI-saturated environment, it becomes scarce. Scarcity creates pricing power.

Amplifying Human Creativity

Going forward, organizations must optimize the relationship between Original Intelligence (OI) and AI to maximize return on investment. When organizations integrate AI for cost reduction while embedding Original Intelligence into differentiation-critical roles, the technology becomes a margin strategy.

What’s more, when organizations systematically measure originality capability using the Hupside framework, they can design teams and workflows that amplify human creativity and ensure that AI augments rather than replaces OI.

Traditional performance metrics cannot distinguish between human originality and AI-assisted output. Hupside’s Hupchecker is the first Original Intelligence discovery infrastructure platform that measures how individuals generate originality when AI is available.

Put another way, it identifies individuals who expand beyond AI’s baseline.

Hupchecker uses short, engaging problem-solving challenges to identify each individual’s originality competencies and approaches. It then produces four primary outputs:

- Original Intelligence Quotient (OIQ)

- OIQ Types

- Personal Originality Value Intensity (POVI)

- Role Originality Value Intensity (ROVI)

Understanding an individual’s competency in these areas helps organizations use Original Intelligence to enhance differentiation. The benefits include:

- Matching individuals with job roles and responsibilities to enhance value creation opportunities, with or without using AI.

- Matching training with individual originality approaches to drive higher AI adoption rates and more discriminating use.

- Giving individuals a sense that they are valued rather than treated as assets to be substituted. AI becomes an “and” rather than an “or” conversation.

- Matching data governance and use structures to an individual’s AI competencies.

- Monitoring performance for AI overreliance and homogenized output.

Predicting Performance

The Original Intelligence Quotient (OIQ) is a direct predictor of the attributes that drive an individual’s performance in an AI-powered organization. It measures:

- Predilection to create differentiable output.

- Likelihood of using AI, and other tools, to create differentiable output.

- Tolerance for ambiguity, along with optimism, self-reliance, and resilience.

- Consistency in performance across varying roles.

High-OIQ individuals expand conceptual boundaries; lower-OIQ individuals align more closely with AI-derived output. Crucially, OIQ captures how people use AI, not whether they use it. Individuals who treat AI as an accelerant to their own thinking demonstrate higher OIQ than those who accept AI output verbatim.

OIQ scores provide enough specificity to support more effective team building, training, and job matching in a post-AI environment. They do so by identifying how an individual demonstrates OI through a distinct cognitive pattern: an OIQ Type.

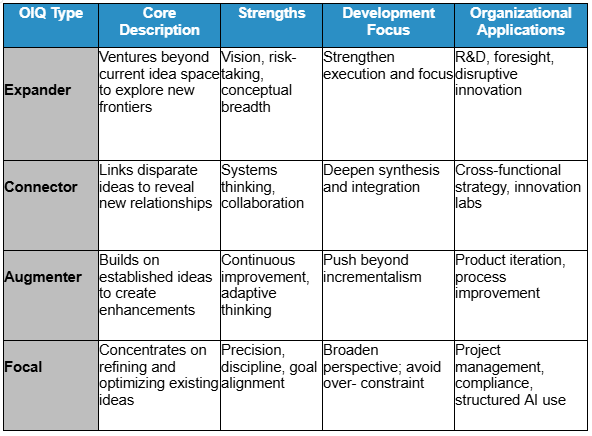

Hupchecker identifies 4 distinctive human patterns for applying OI in their role performance. The four primary OIQ Types are provided below:

Together, OIQ and OIQ Types establish a baseline that predicts how readily an individual will adapt to using AI and apply originality under AI-assisted conditions. That baseline also surfaces role-specific risk patterns:

- Expanders may overextend AI use without guardrails.

- Connectors may over-integrate outputs without structure.

- Augmenters may underutilize AI’s creative potential.

- Focals may accept AI outputs too uncritically.

These risks can be mitigated through pairing, training, and governance design.

Autonomy As Force Multiplier

When deploying AI in a group, additional measurement is needed. Original Intelligence only creates value when individuals have sufficient autonomy to exercise it.

Role Originality Value Intensity (ROVI) measures the expected autonomy of a role—the level of discretion and responsibility embedded in it.

In general, someone who works on their own without supervision has a higher degree of autonomy than someone who works in a group, and a senior leader has more ability to influence an organization than an entry-level team member. This provides a baseline for the expected autonomy of an individual based on their role.

Personal Originality Value Intensity (POVI) measures the autonomy an individual actually experiences within their organizational culture.

Together, ROVI and POVI reveal whether originality is being enabled or constrained.

- High OIQ + low autonomy signals frustration and underutilization.

- High autonomy + low OIQ signals quality risk.

- Strong alignment maximizes differentiation and AI return on investment.

When combined with OIQ, these measures provide a clear picture of an individual’s opportunity to create value within their role:

- OIQ vs. POVI: Is originality capability being enabled or constrained?

- OIQ vs. ROVI: Is originality capability aligned with the role’s differentiation demands?
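The comparisons above can be sketched in code. The source does not say how OIQ, POVI, or ROVI are scaled, so this minimal example assumes each has been normalized to a 0 to 1 range and uses a hypothetical 0.6 cutoff for "high". The `Profile` class, the `diagnose` function, and the label strings are illustrative names, not part of Hupchecker. Checking the quality-risk case against ROVI rather than POVI is also an assumption.

```python
from dataclasses import dataclass

HIGH = 0.6  # hypothetical cutoff for a "high" score (assumption)

UNDERUSED = "high OIQ, low experienced autonomy: frustration and underutilization"
QUALITY_RISK = "high role autonomy, low OIQ: quality risk"
NO_FLAG = "no mismatch flagged"


@dataclass(frozen=True)
class Profile:
    oiq: float   # originality capability, assumed normalized to 0-1
    povi: float  # autonomy the individual actually experiences (assumed 0-1)
    rovi: float  # autonomy the role is expected to carry (assumed 0-1)


def diagnose(p: Profile) -> str:
    """Flag the two mismatch patterns described in the text."""
    # OIQ vs. POVI: is originality capability enabled or constrained?
    if p.oiq >= HIGH and p.povi < HIGH:
        return UNDERUSED
    # OIQ vs. ROVI: does capability meet the role's demands?
    if p.oiq < HIGH and p.rovi >= HIGH:
        return QUALITY_RISK
    return NO_FLAG


print(diagnose(Profile(oiq=0.8, povi=0.3, rovi=0.7)))
print(diagnose(Profile(oiq=0.3, povi=0.8, rovi=0.8)))
print(diagnose(Profile(oiq=0.8, povi=0.8, rovi=0.8)))
```

A real assessment would presumably use calibrated bands rather than a single cutoff. The point of the sketch is only that the two comparisons are separate checks.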

Establishing an AI Readiness Baseline

By baselining OIQ, OIQ Types, POVI, and ROVI across units, organizations can establish an AI Readiness Index.

This allows leaders to:

- Predict early adopters and likely resistors

- Identify where autonomy loss may trigger disengagement

- Staff escalation and discretionary roles intentionally

- Target governance where AI overreliance risk is highest

- Design cross-type teams to accelerate learning

For example:

- High OIQ with high autonomy = natural early adopters; suited for complex discretionary roles with governance oversight.

- High OIQ with low autonomy = likely frustrated; may require role redesign or greater responsibility.

- Mid OIQ with constrained autonomy = benefit from structured AI training and defined guardrails.

- Low OIQ with high autonomy = require stronger review loops and quality controls.

- Low OIQ with low autonomy = best positioned in structured, repeatable workflows with clear guidance.
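The examples above amount to a lookup from an OIQ band and an autonomy band to a staffing recommendation. The sketch below transcribes them into a table. The numeric cutoffs, the 0 to 1 score scale, and the function names are assumptions made for illustration. Combinations the examples do not cover return `None` rather than a guessed recommendation.

```python
from typing import Optional

# Guidance keyed by (OIQ band, autonomy band), transcribed from the examples above.
GUIDANCE = {
    ("high", "high"): "natural early adopter; complex discretionary roles with governance oversight",
    ("high", "low"): "likely frustrated; consider role redesign or greater responsibility",
    ("mid", "low"): "structured AI training and defined guardrails",
    ("low", "high"): "stronger review loops and quality controls",
    ("low", "low"): "structured, repeatable workflows with clear guidance",
}


def oiq_band(score: float, low_cut: float = 0.4, high_cut: float = 0.7) -> str:
    """Bucket an OIQ score (assumed 0-1) using hypothetical cutoffs."""
    if score >= high_cut:
        return "high"
    if score >= low_cut:
        return "mid"
    return "low"


def autonomy_band(score: float, cut: float = 0.5) -> str:
    """Bucket an autonomy score (assumed 0-1) using a hypothetical cutoff."""
    return "high" if score >= cut else "low"


def readiness_guidance(oiq: float, autonomy: float) -> Optional[str]:
    """Return the matching guidance, or None if the examples do not cover it."""
    return GUIDANCE.get((oiq_band(oiq), autonomy_band(autonomy)))


print(readiness_guidance(0.9, 0.8))
print(readiness_guidance(0.2, 0.1))
print(readiness_guidance(0.5, 0.9))  # mid OIQ, high autonomy: not covered
```

Keeping the table separate from the banding logic means cutoffs can be recalibrated without changing the recommendations.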

OI in Action: Consulting Use Case

For consulting firms, the AI moment represents both risk and a category-defining opportunity. Clients are no longer asking whether to adopt AI; they are asking why their investments have not produced durable advantages and how to avoid becoming interchangeable with competitors using the same tools.

The first wave of advisory—pilots, tooling, and implementation—is largely complete. The mandate has shifted to value capture, with boards demanding clear links between AI investment, margin expansion, and defensible differentiation. Firms positioned solely around implementation risk commoditization.

At the same time, AI has flattened traditional consulting differentiation. Frameworks, analyses, and strategic narratives are increasingly shaped by the same models clients can access themselves. As capabilities converge, efficiency-led engagements compress fees and shorten value windows. Speed and polish are no longer premium. Strategic relevance now depends on helping clients preserve what AI erodes: originality, judgment, and pricing power.

This challenge is compounded by talent blind spots. AI performance depends not just on the tools deployed, but on who shapes and interprets them. Without objective ways to identify individuals who expand the idea space, organizations default to hierarchy or tenure—leading to stalled adoption and homogenized output.

As AI absorbs more analytical work, the highest-value consulting shifts upstream to designing operating models where AI drives efficiency and humans drive differentiation. Firms that can measure and operationalize Original Intelligence gain a defensible advisory position anchored in measurable advantage.

Change Management Made Easy

Successfully integrating AI requires a structured, human-centered change process that aligns technology adoption with measurable gains in differentiation and performance:

Stage Setting: Set the foundation by defining human-centered principles, clarifying the risks of AI-driven sameness, and positioning AI as a tool for value expansion—not labor substitution.

Prepare: Clarify business outcomes, define governance, and establish a baseline understanding of organizational originality before deployment begins.

Pilot: Launch targeted pilots with high-OIQ talent, build augmentation capabilities, and benchmark learning through structured reassessment.

Scale: Intentionally design mixed-profile teams, codify role-based success standards, and reinforce originality through measurement and shared practice.

Sustain: Continuously reassess originality metrics, retain and develop high-impact talent, guard against AI bias, and link results to measurable business outcomes.

Leading indicators

- Adoption by workflow

- Time-to-proficiency

- Shifts in OIQ and idea-space diversity

- Employee trust and engagement

- Originality included in hiring and promotion

Lagging indicators

- Cost-to-serve improvements

- Differentiated revenue growth

- Retention of high-impact talent

- AI-enabled EBIT contribution

Conclusion

The bottom line is that generative AI makes work faster, cheaper, and more uniform. As uniformity rises, originality becomes scarce, and scarcity is what sustains pricing power.

The organizations that win in the AI era will not treat AI as a strategy in itself. They will use it aggressively to remove friction and cost, while deliberately embedding Original Intelligence into the roles, decisions, and moments that define differentiation. In doing so, they activate both margin levers at once: lower costs and higher willingness to pay.

When originality is measured and matched to roles deliberately, organizations can predict who will use AI in value-expanding ways, redesign work to protect differentiation, sequence change to avoid homogenization, and capture efficiency gains without eroding distinctiveness.